Prep: Things to do before we get started.

Open these slides: https://tampa.bretfisher.com/

Get a server: I provisioned one for each. Ask me for the IPs.

Access your server over SSH

ssh docker@w.x.y.z or WebSSH (http://w.x.y.z:8080)

- username: docker | password: training

Let me know if you can't get in, we have multiple backup options!

Note

This is hands on. You'll want to do most of these commands with me.

These slides are take-home.

Introductions

Hello! I'm Bret Fisher (@bretfisher), a fan of 🐳 🏖 🥃 👾 ✈️ 🐶

I'm a DevOps Consultant+Trainer (300k students), OSS maintainer, and Docker Captain.

👉 Watch: My weekly cloud native DevOps live show with guests. Join us on Thursdays!

👉 Listen: That show turns into a podcast called "DevOps and Docker Talk."

👉 Read: You can get my weekly updates in my Newsletter.

👉 Chat: Join 12k DevOps pros in my Discord server devops.fan

logistics-bret.md

Accessing these slides now

We recommend that you open these slides in your browser:

This is a public URL, you're welcome to share it with others!

Use arrows to move to next/previous slide

(up, down, left, right, page up, page down)

Type a slide number + ENTER to go to that slide

The slide number is also visible in the URL bar

(e.g. .../#123 for slide 123)

These slides are open source

The sources of these slides are available in a public GitHub repository:

These slides are written in Markdown

You are welcome to share, re-use, re-mix these slides

Typos? Mistakes? Questions? Feel free to hover over the bottom of the slide ...

👇 Try it! The source file will be shown and you can view it on GitHub and fork and edit it.

Accessing these slides later

Slides will remain online so you can review them later if needed

(let's say we'll keep them online at least 1 year, how about that?)

You can download the slides using that URL:

https://tampa.bretfisher.com/slides.zip

(then open the file docker.yml.html)

You can also generate a PDF of the slides

(by printing them to a file; but be patient with your browser!)

These slides are constantly updated

Extra details

This slide has a little magnifying glass in the top left corner

This magnifying glass indicates slides that provide extra details

Feel free to skip them if:

you are in a hurry

you are new to this and want to avoid cognitive overload

you want only the most essential information

You can review these slides another time if you want, they'll be waiting for you ☺

Part 3

- (Extra Docker content)

- Tips for efficient Dockerfiles

- Dockerfile examples

- Reducing image size

- Multi-stage builds

- Exercise — writing better Dockerfiles

- Getting inside a container

- Restarting and attaching to containers

- Naming and inspecting containers

- Labels

- Advanced Dockerfile Syntax

- Container network drivers

(auto-generated TOC)

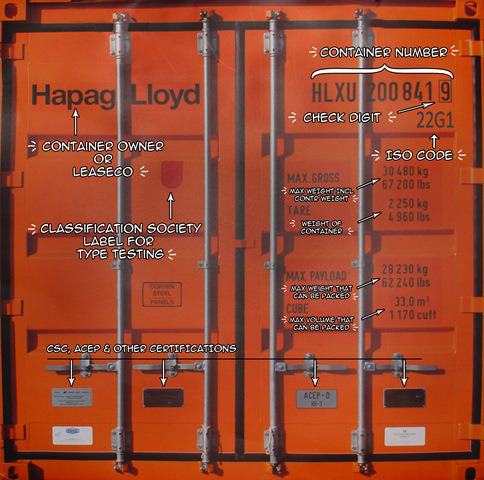

What is Docker, the idea?

Docker Inc. makes many tools to build, deploy, and run containers.

They invented the modern way to run "containers" (previously: jails, chroot, zones, etc.).

Their original 2013 ideas are now an industry standard called OCI (Open Container Initiative).

Those standards are now used by hundreds of tools in cloud native computing.

The three innovations are:

- Combine your app and all its dependencies in a container image

- Move that image around with registries

- Run that image anywhere in a container

I wrote a big article around this with lots of details. Bookmark for later!

What is Docker Inc., the company?

Docker Inc. was quickly formed after Docker the tool/project was created in 2013.

Previously, Docker Inc. focused on Dev and Ops tooling (2013-2019).

In 2019, they sold two-thirds of the company and its enterprise-focused software to Mirantis.

Now they are (finally) successful focusing on Dev tooling.

Docker Subscription includes:

- Docker Desktop for macOS, Windows, Linux desktops

- Docker Hub image storage

- Image security scans

- Automated image builds

We're only using Docker open source today!

Their Hub & Docker Desktop are totally free while learning and for personal use.

What is docker the tool?

"Installing Docker" really means "Installing the Docker Engine and CLI".

The Docker Engine is a daemon (a service running in the background).

This daemon manages containers, the same way that a hypervisor manages VMs.

We interact with the Docker Engine by using the Docker CLI.

The Docker CLI and the Docker Engine communicate through an API.

There are many other programs and client libraries which use that API.

Didn't Kubernetes replace Docker?

This is a common misconception.

Docker only controls many containers on a single server.

Kubernetes (K8s) was invented to control Docker across many servers.

Kubernetes doesn't run containers itself, it only controls a runtime.

For years, Docker (dockerd) was the most popular container runtime.

Then Docker Inc. created containerd as a lightweight runtime for servers.

Today dockerd and containerd are most of the runtime market. Others include CRI-O and Podman (Red Hat).

dockerd or podman = best for humans locally.

containerd or cri-o = best for K8s.

Did you know Docker has its own Orchestration?

Docker Swarm "mode" is still a thing

And might be having a renaissance

(I have a course on that too!)

Todays_Agenda.md

Our training environment

If you are attending a tutorial or workshop:

- a VM has been provisioned for each student

If you are doing or re-doing this course on your own, you can:

install Docker locally (as explained in the chapter "Installing Docker")

install Docker on e.g. a cloud VM

use https://www.play-with-docker.com/ to instantly get a training environment

containers/Training_Environment.md

Checking your Virtual Machine

Once logged in, make sure that you can run a basic Docker command:

    $ docker version
    Client: Docker Engine - Community
     Version:           20.10.17
     API version:       1.41
     Go version:        go1.17.11
     Git commit:        100c701
     Built:             Mon Jun  6 23:02:46 2022
     OS/Arch:           linux/amd64
     Context:           default
     Experimental:      true

    Server: Docker Engine - Community
     Engine:
      Version:          20.10.17
      API version:      1.41 (minimum version 1.12)
      Go version:       go1.17.11
      Git commit:       a89b842
      Built:            Mon Jun  6 23:00:51 2022
      OS/Arch:          linux/amd64
      Experimental:     false
    ...

If this doesn't work, raise your hand so that an instructor can assist you!

Our first containers

Objectives

At the end of this lesson, you will have:

Seen Docker in action.

Started your first containers.

containers/First_Containers.md

Hello World

In your Docker environment, just run the following command:

    $ docker run busybox echo hello world
    hello world

(If your Docker install is brand new, you will also see a few extra lines, corresponding to the download of the busybox image.)

That was our first container!

We used one of the smallest, simplest images available: busybox.

busybox is typically used in embedded systems (phones, routers...)

We ran a single process and echo'ed hello world.

A more useful container

Let's run a more exciting container:

    $ docker run -it ubuntu
    root@04c0bb0a6c07:/#

This is a brand new container.

It runs a bare-bones, no-frills ubuntu system.

-it is shorthand for -i -t.

-i tells Docker to connect us to the container's stdin.

-t tells Docker that we want a pseudo-terminal.

Do something in our container

Try to run figlet in our container.

    root@04c0bb0a6c07:/# figlet hello
    bash: figlet: command not found

Alright, we need to install it.

Install a package in our container

We want figlet, so let's install it:

    root@04c0bb0a6c07:/# apt-get update
    ...
    Fetched 1514 kB in 14s (103 kB/s)
    Reading package lists... Done
    root@04c0bb0a6c07:/# apt-get install figlet
    Reading package lists... Done
    ...

One minute later, figlet is installed!

Try to run our freshly installed program

The figlet program takes a message as a parameter.

    root@04c0bb0a6c07:/# figlet hello
     _          _ _
    | |__   ___| | | ___
    | '_ \ / _ \ | |/ _ \
    | | | |  __/ | | (_) |
    |_| |_|\___|_|_|\___/

Beautiful! 😍

Counting packages in the container

Let's check how many packages are installed there.

    root@04c0bb0a6c07:/# dpkg -l | wc -l
    97

- dpkg -l lists the packages installed in our container

- wc -l counts them

How many packages do we have on our host?

Counting packages on the host

Exit the container by logging out of the shell, like you would usually do.

(E.g. with ^D or exit)

    root@04c0bb0a6c07:/# exit

Now, try to:

- run dpkg -l | wc -l. How many packages are installed?

- run figlet. Does that work?

Host and containers are independent things

We ran an ubuntu container on a Linux/Windows/macOS host.

They have different, independent packages.

Installing something on the host doesn't expose it to the container.

And vice-versa.

Even if both the host and the container have the same Linux distro!

We can run any container on any host.

(One exception: Windows containers can only run on Windows hosts; at least for now.)

Where's our container?

Our container is now in a stopped state.

It still exists on disk, but all compute resources have been freed up.

We will see later how to get back to that container.

Starting another container

What if we start a new container, and try to run figlet again?

    $ docker run -it ubuntu
    root@b13c164401fb:/# figlet
    bash: figlet: command not found

We started a brand new container.

The basic Ubuntu image was used, and figlet is not here.

Where's my container?

Can we reuse that container that we took time to customize?

We can, but that's not the default workflow with Docker.

What's the default workflow, then?

Always start with a fresh container.

If we need something installed in our container, build a custom image.

That seems complicated!

We'll see that it's actually pretty easy!

And what's the point?

This puts a strong emphasis on automation and repeatability. Let's see why ...

Pets vs. Cattle

In the "pets vs. cattle" metaphor, there are two kinds of servers.

Pets:

have distinctive names and unique configurations

when they have an outage, we do everything we can to fix them

Cattle:

have generic names (e.g. with numbers) and generic configuration

configuration is enforced by configuration management, golden images ...

when they have an outage, we can replace them immediately with a new server

What's the connection with Docker and containers?

Local development environments

When we use local VMs (with e.g. VirtualBox or VMware), our workflow looks like this:

create VM from base template (Ubuntu, CentOS...)

install packages, set up environment

work on project

when done, shut down VM

next time we need to work on project, restart VM as we left it

if we need to tweak the environment, we do it live

Over time, the VM configuration evolves, diverges.

We don't have a clean, reliable, deterministic way to provision that environment.

Local development with Docker

With Docker, the workflow looks like this:

create container image with our dev environment

run container with that image

work on project

when done, shut down container

next time we need to work on project, start a new container

if we need to tweak the environment, we create a new image

We have a clear definition of our environment, and can share it reliably with others.

Let's see in the next chapters how to bake a custom image with figlet!

Background containers

Objectives

Our first containers were interactive.

We will now see how to:

- Run a non-interactive container.

- Run a container in the background.

- List running containers.

- Check the logs of a container.

- Stop a container.

- List stopped containers.

containers/Background_Containers.md

A non-interactive container

We will run a small custom container.

This container just displays the time every second.

    $ docker run jpetazzo/clock
    Fri Feb 20 00:28:53 UTC 2015
    Fri Feb 20 00:28:54 UTC 2015
    Fri Feb 20 00:28:55 UTC 2015
    ...

- This container will run forever.

- To stop it, press ^C.

- Docker has automatically downloaded the image jpetazzo/clock.

- This image is a user image, created by jpetazzo.

- We will hear more about user images (and other types of images) later.

When ^C doesn't work...

Sometimes, ^C won't be enough.

Why? And how can we stop the container in that case?

What happens when we hit ^C

SIGINT gets sent to the container, which means:

- SIGINT gets sent to PID 1 (default case)

- SIGINT gets sent to foreground processes when running with -ti

But there is a special case for PID 1: it ignores all signals!

- except SIGKILL and SIGSTOP

- except signals handled explicitly

TL;DR: there are many circumstances when ^C won't stop the container.

Why is PID 1 special?

PID 1 has some extra responsibilities:

it starts (directly or indirectly) every other process

when a process exits, its child processes are "reparented" under PID 1

When PID 1 exits, everything stops:

on a "regular" machine, it causes a kernel panic

in a container, it kills all the processes

We don't want PID 1 to stop accidentally

That's why it has these extra protections
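The "signals handled explicitly" exception can be sketched in plain shell. This is a minimal, hypothetical entrypoint (run_clock is an illustrative name, not the actual script inside jpetazzo/clock): it prints the time every second, but installs a trap so that TERM and INT end it cleanly, which is what a process needs to do if it is going to run as PID 1.

```shell
#!/bin/sh
# Hypothetical clock-style entrypoint (illustrative only).
# Without the trap, a shell running as PID 1 would ignore TERM and INT.
run_clock() {
    # Handle the signals explicitly so the process exits promptly.
    trap 'exit 0' TERM INT
    while :; do
        date
        # Sleep in the background and wait on it: the shell runs the trap
        # as soon as "wait" is interrupted, not after "sleep" finishes.
        sleep 1 &
        wait $!
    done
}

# To use it as a script, call the function at the end:
#   run_clock
```

With a handler like this in place, ^C and docker stop should end the container right away instead of being ignored.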

How to stop these containers, then?

Start another terminal and forget about them

(for now!)

We'll shortly learn about docker kill

Run a container in the background

Containers can be started in the background, with the -d flag (detached mode):

    $ docker run -d jpetazzo/clock
    47d677dcfba4277c6cc68fcaa51f932b544cab1a187c853b7d0caf4e8debe5ad

- We don't see the output of the container.

- But don't worry: Docker collects that output and logs it!

- Docker gives us the ID of the container.

List running containers

How can we check that our container is still running?

With docker ps. Just like the UNIX ps command, it lists running processes.

    $ docker ps
    CONTAINER ID  IMAGE           ...  CREATED        STATUS        ...
    47d677dcfba4  jpetazzo/clock  ...  2 minutes ago  Up 2 minutes  ...

Docker tells us:

- The (truncated) ID of our container.

- The image used to start the container.

- That our container has been running (Up) for a couple of minutes.

- Other information (COMMAND, PORTS, NAMES) that we will explain later.

Starting more containers

Let's start two more containers.

    $ docker run -d jpetazzo/clock
    57ad9bdfc06bb4407c47220cf59ce21585dce9a1298d7a67488359aeaea8ae2a
    $ docker run -d jpetazzo/clock
    068cc994ffd0190bbe025ba74e4c0771a5d8f14734af772ddee8dc1aaf20567d

Check that docker ps correctly reports all 3 containers.

Viewing only the last container started

When many containers are already running, it can be useful to see only the last container that was started.

This can be achieved with the -l ("Last") flag:

    $ docker ps -l
    CONTAINER ID  IMAGE           ...  CREATED        STATUS        ...
    068cc994ffd0  jpetazzo/clock  ...  2 minutes ago  Up 2 minutes  ...

View only the IDs of the containers

Many Docker commands will work on container IDs: docker stop, docker rm...

If we want to list only the IDs of our containers (without the other columns or the header line), we can use the -q ("Quiet", "Quick") flag:

    $ docker ps -q
    068cc994ffd0
    57ad9bdfc06b
    47d677dcfba4

Combining flags

We can combine -l and -q to see only the ID of the last container started:

    $ docker ps -lq
    068cc994ffd0

At first glance, it looks like this would be particularly useful in scripts.

However, if we want to start a container and get its ID in a reliable way, it is better to use docker run -d, which prints the ID of the container it just started.

(Using docker ps -lq is prone to race conditions: what happens if someone

else, or another program or script, starts another container just before

we run docker ps -lq?)
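To make the race-free approach concrete, here is a small sketch that captures the ID straight from docker run -d instead of asking docker ps -lq afterwards. The helper names start_detached and short_id are made up for this example; only docker run -d comes from the slides.

```shell
# Start a container in the background and print its full ID on stdout.
# (start_detached is a hypothetical helper name.)
start_detached() {
    docker run -d "$@"
}

# Truncate a full ID to the 12 characters shown by "docker ps".
# (short_id is a hypothetical helper name.)
short_id() {
    printf '%s\n' "$1" | cut -c1-12
}

# Usage sketch:
#   cid=$(start_detached jpetazzo/clock)
#   docker logs --tail 3 "$cid"
```

Because the ID comes from the same command that created the container, it doesn't matter what anyone else starts in the meantime.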

View the logs of a container

We told you that Docker was logging the container output.

Let's see that now.

    $ docker logs 068
    Fri Feb 20 00:39:52 UTC 2015
    Fri Feb 20 00:39:53 UTC 2015
    ...

- We specified a prefix of the full container ID.

- You can, of course, specify the full ID.

- The logs command will output the entire logs of the container.

(Sometimes, that will be too much. Let's see how to address that.)

View only the tail of the logs

To avoid being spammed with eleventy pages of output, we can use the --tail option:

    $ docker logs --tail 3 068
    Fri Feb 20 00:55:35 UTC 2015
    Fri Feb 20 00:55:36 UTC 2015
    Fri Feb 20 00:55:37 UTC 2015

- The parameter is the number of lines that we want to see.

Follow the logs in real time

Just like with the standard UNIX command tail -f, we can follow the logs of our container:

    $ docker logs --tail 1 --follow 068
    Fri Feb 20 00:57:12 UTC 2015
    Fri Feb 20 00:57:13 UTC 2015
    ^C

- This will display the last line in the log file.

- Then, it will continue to display the logs in real time.

- Use ^C to exit.

Stop our container

There are two ways we can terminate our detached container.

- Killing it using the docker kill command.

- Stopping it using the docker stop command.

The first one stops the container immediately, by using the KILL signal.

The second one is more graceful. It sends a TERM signal, and after 10 seconds, if the container has not stopped, it sends KILL.

Reminder: the KILL signal cannot be intercepted, and will forcibly terminate the container.

Stopping our containers

Let's stop one of those containers:

    $ docker stop 47d6
    47d6

This will take 10 seconds:

- Docker sends the TERM signal;

- the container doesn't react to this signal (it's a simple Shell script with no special signal handling);

- 10 seconds later, since the container is still running, Docker sends the KILL signal;

- this terminates the container.
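The sequence above can be approximated outside Docker with ordinary processes. What follows is a rough sketch and not Docker's actual implementation: graceful_stop is a hypothetical helper that sends TERM to a PID, polls for up to a timeout (10 seconds by default, mirroring docker stop), and falls back to KILL.

```shell
# graceful_stop PID [TIMEOUT]
# Send TERM, wait up to TIMEOUT seconds, then send KILL.
# (Hypothetical helper; an approximation of what "docker stop" does.)
graceful_stop() {
    pid=$1
    timeout=${2:-10}
    # If TERM cannot be delivered, the process is already gone.
    kill -TERM "$pid" 2>/dev/null || return 0
    while [ "$timeout" -gt 0 ]; do
        # "kill -0" sends no signal; it only checks that the PID exists.
        kill -0 "$pid" 2>/dev/null || return 0
        sleep 1
        timeout=$((timeout - 1))
    done
    # Still there after the grace period: KILL cannot be intercepted.
    kill -KILL "$pid" 2>/dev/null
    return 0
}

# Usage sketch:
#   graceful_stop "$pid" 10
```

With Docker itself, the grace period can be shortened with the -t flag, e.g. docker stop -t 2 47d6.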

Killing the remaining containers

Let's be less patient with the two other containers:

    $ docker kill 068 57ad
    068
    57ad

The stop and kill commands can take multiple container IDs.

Those containers will be terminated immediately (without the 10-second delay).

Let's check that our containers don't show up anymore:

    $ docker ps
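Since docker ps -q prints nothing but IDs and docker kill accepts several IDs at once, the two combine naturally. The helper below is only a sketch (kill_all_running is a made-up name) and it kills every running container on the host, so it is best kept for a throwaway training VM.

```shell
# Kill every running container on this Docker host.
# (kill_all_running is a hypothetical helper name.)
kill_all_running() {
    ids=$(docker ps -q)
    # Nothing running: avoid calling "docker kill" with no arguments.
    [ -n "$ids" ] || return 0
    # Unquoted on purpose: each ID must become its own argument.
    docker kill $ids
}

# Usage sketch:
#   kill_all_running
```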

List stopped containers

We can also see stopped containers, with the -a (--all) option.

    $ docker ps -a
    CONTAINER ID  IMAGE           ...  CREATED      STATUS
    068cc994ffd0  jpetazzo/clock  ...  21 min. ago  Exited (137) 3 min. ago
    57ad9bdfc06b  jpetazzo/clock  ...  21 min. ago  Exited (137) 3 min. ago
    47d677dcfba4  jpetazzo/clock  ...  23 min. ago  Exited (137) 3 min. ago
    5c1dfd4d81f1  jpetazzo/clock  ...  40 min. ago  Exited (0) 40 min. ago
    b13c164401fb  ubuntu          ...  55 min. ago  Exited (130) 53 min. ago
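The numbers in parentheses are exit statuses, and they follow the usual shell convention: a status above 128 typically means the process ended because of signal number (status - 128). A program can also return such a value on its own, so treat this as a hint rather than proof. The helper name explain_exit_code is invented for this sketch.

```shell
# Translate an exit status using the "128 + signal number" convention.
# (explain_exit_code is a hypothetical helper name.)
explain_exit_code() {
    code=$1
    if [ "$code" -gt 128 ]; then
        echo "128 + signal $((code - 128))"
    else
        echo "plain exit status $code"
    fi
}

# Usage sketch:
#   explain_exit_code 137
```

Applied to the listing above, 137 is 128 + 9 (KILL, from docker stop timing out or docker kill) and 130 is 128 + 2 (INT).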

Understanding Docker images

Objectives

In this section, we will explain:

What is an image.

What is a layer.

The various image namespaces.

How to search and download images.

Image tags and when to use them.

What is an image?

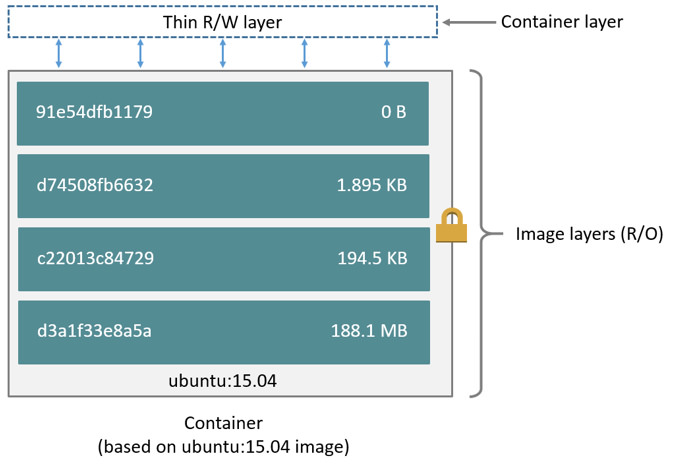

Image = files + metadata

These files form the root filesystem of our container.

The metadata can indicate a number of things, e.g.:

- the author of the image

- the command to execute in the container when starting it

- environment variables to be set

- etc.

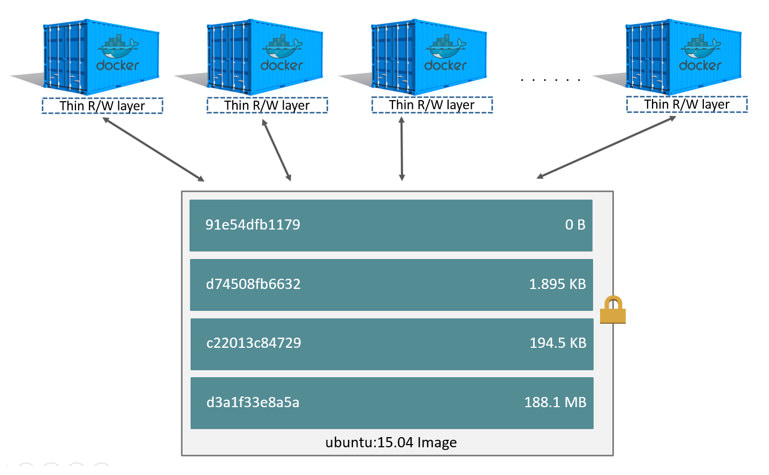

Images are made of layers, conceptually stacked on top of each other.

Each layer can add, change, and remove files and/or metadata.

Images can share layers to optimize disk usage, transfer times, and memory use.

Example for a Java webapp

Each of the following items will correspond to one layer:

- CentOS base layer

- Packages and configuration files added by our local IT

- JRE

- Tomcat

- Our application's dependencies

- Our application code and assets

- Our application configuration

(Note: app config is generally added by orchestration facilities.)

Differences between containers and images

An image is a read-only filesystem.

A container is an encapsulated set of processes,

running in a read-write copy of that filesystem.

To optimize container boot time, copy-on-write is used instead of regular copy.

docker run starts a container from a given image.

Wait a minute...

If an image is read-only, how do we change it?

We don't.

We create a new container from that image.

Then we make changes to that container.

When we are satisfied with those changes, we transform them into a new layer.

A new image is created by stacking the new layer on top of the old image.

A chicken-and-egg problem

The only way to create an image is by "freezing" a container.

The only way to create a container is by instantiating an image.

Help!

Creating the first images

There is a special empty image called scratch.

- It allows us to build from scratch.

The docker import command loads a tarball into Docker.

- The imported tarball becomes a standalone image.

- That new image has a single layer.

Note: you will probably never have to do this yourself.

Creating other images

docker commit

- Saves all the changes made to a container into a new layer.

- Creates a new image (effectively a copy of the container).

docker build (used 99% of the time)

- Performs a repeatable build sequence.

- This is the preferred method!

We will explain both methods in a moment.

Image namespaces

There are three namespaces:

- Official images

  e.g. ubuntu, busybox ...

- User (and organizations) images

  e.g. bretfisher/clock

- Self-hosted images

  e.g. registry.example.com:5000/my-private/image

Let's explain each of them.
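As a preview, the three namespaces can be told apart from the image reference alone. The sketch below relies on the rule Docker uses to spot a registry host (the first path component contains a dot or a colon, or is localhost); the function name classify_image is hypothetical, and explicit forms such as library/ubuntu are not handled.

```shell
# Guess which namespace an image reference belongs to.
# (classify_image is a hypothetical helper; "library/ubuntu" style
# references would be reported as "user" by this simplified version.)
classify_image() {
    ref=$1
    case "$ref" in
        */*) first=${ref%%/*} ;;            # text before the first slash
        *)   echo "official"; return 0 ;;   # no slash: root namespace
    esac
    case "$first" in
        localhost|*.*|*:*) echo "self-hosted" ;;  # looks like host[:port]
        *)                 echo "user" ;;         # Docker Hub user or org
    esac
}

# Usage sketch:
#   classify_image bretfisher/clock
```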

Root namespace

The root namespace is for official images.

They are gated by Docker Inc.

They are generally authored and maintained by third parties.

Those images include:

Small, "swiss-army-knife" images like busybox.

Distro images to be used as bases for your builds, like ubuntu, fedora...

Ready-to-use components and services, like redis, postgres...

Over 150 at this point!

User namespace

The user namespace holds images for Docker Hub users and organizations.

For example:

bretfisher/clock

The Docker Hub user is: bretfisher

The image name is: clock

Self-hosted namespace

This namespace holds images which are not hosted on Docker Hub, but on third party registries.

They contain the hostname (or IP address), and optionally the port, of the registry server.

For example:

localhost:5000/wordpress

- localhost:5000 is the host and port of the registry

- wordpress is the name of the image

Other examples:

- quay.io/coreos/etcd

- gcr.io/google-containers/hugo

How do you store and manage images?

Images can be stored:

- On your Docker host.

- In a Docker registry.

You can use the Docker client to download (pull) or upload (push) images.

To be more accurate: you can use the Docker client to tell a Docker Engine to push and pull images to and from a registry.

Showing current images

Let's look at what images are on our host now.

    $ docker images
    REPOSITORY      TAG     IMAGE ID      CREATED        SIZE
    fedora          latest  ddd5c9c1d0f2  3 days ago     204.7 MB
    centos          latest  d0e7f81ca65c  3 days ago     196.6 MB
    ubuntu          latest  07c86167cdc4  4 days ago     188 MB
    redis           latest  4f5f397d4b7c  5 days ago     177.6 MB
    postgres        latest  afe2b5e1859b  5 days ago     264.5 MB
    alpine          latest  70c557e50ed6  5 days ago     4.798 MB
    debian          latest  f50f9524513f  6 days ago     125.1 MB
    busybox         latest  3240943c9ea3  2 weeks ago    1.114 MB
    training/namer  latest  902673acc741  9 months ago   289.3 MB
    jpetazzo/clock  latest  12068b93616f  12 months ago  2.433 MB

Downloading images

There are two ways to download images.

- Explicitly, with docker pull.

- Implicitly, when executing docker run and the image is not found locally.

Pulling an image

    $ docker pull debian:jessie
    Pulling repository debian
    b164861940b8: Download complete
    b164861940b8: Pulling image (jessie) from debian
    d1881793a057: Download complete

- As seen previously, images are made up of layers.

- Docker has downloaded all the necessary layers.

- In this example, :jessie indicates which exact version of Debian we would like. It is a version tag.

Images and tags

Images can have tags.

Tags define image versions or variants.

docker pull ubuntu will refer to ubuntu:latest.

The :latest tag is generally updated often.

When to (not) use tags

Don't specify tags:

- When doing rapid testing and prototyping.

- When experimenting.

- When you want the latest version.

Do specify tags:

- When recording a procedure into a script.

- When going to production.

- To ensure that the same version will be used everywhere.

- To ensure repeatability later.

This is similar to what we would do with pip install, npm install, etc.
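In a script, one way to keep the tag visible is to spell out what Docker would otherwise assume. The sketch below (with_default_tag is a hypothetical helper) appends :latest only when the reference carries no tag, taking care not to mistake a registry port such as localhost:5000 for one.

```shell
# Print an image reference with an explicit tag.
# (with_default_tag is a hypothetical helper name.)
with_default_tag() {
    ref=$1
    # Only the last path component can carry a tag; a colon earlier in
    # the reference is a registry port, e.g. localhost:5000/wordpress.
    last=${ref##*/}
    case "$last" in
        *:*) echo "$ref" ;;          # a tag is already present
        *)   echo "$ref:latest" ;;   # Docker's implicit default
    esac
}

# Usage sketch:
#   docker pull "$(with_default_tag ubuntu)"
```

For anything going to production, replace the printed :latest with a pinned version, as recommended above.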

Multi-arch images

An image can support multiple architectures

More precisely, a specific tag in a given repository can have either:

a single manifest referencing an image for a single architecture

a manifest list (or fat manifest) referencing multiple images

In a manifest list, each image is identified by a combination of:

- os (linux, windows)

- architecture (amd64, arm, arm64...)

- optional fields like variant (for arm and arm64), os.version (for windows)

Working with multi-arch images

The Docker Engine will pull "native" images when available

(images matching its own os/architecture/variant)

We can ask for a specific image platform with --platform

The Docker Engine can run non-native images thanks to QEMU+binfmt

(automatically on Docker Desktop; with a bit of setup on Linux)
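The value passed to --platform uses Docker's own architecture names, which differ from what uname -m prints. Here is a small, non-exhaustive sketch of that mapping for Linux images; machine_to_platform is a hypothetical helper, and any architecture not listed makes it fail rather than guess.

```shell
# Map a "uname -m" machine name to a Docker platform string.
# (machine_to_platform is a hypothetical helper; the list is not exhaustive.)
machine_to_platform() {
    case "$1" in
        x86_64|amd64)  echo "linux/amd64" ;;
        aarch64|arm64) echo "linux/arm64" ;;
        armv7l)        echo "linux/arm/v7" ;;
        armv6l)        echo "linux/arm/v6" ;;
        *)             return 1 ;;
    esac
}

# Usage sketch:
#   docker pull --platform "$(machine_to_platform aarch64)" ubuntu
```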

Section summary

We've learned how to:

- Understand images and layers.

- Understand Docker image namespacing.

- Search and download images.

Building Docker images with a Dockerfile

Objectives

We will build a container image automatically, with a Dockerfile.

At the end of this lesson, you will be able to:

- Write a Dockerfile.

- Build an image from a Dockerfile.

containers/Building_Images_With_Dockerfiles.md

Dockerfile overview

A Dockerfile is a build recipe for a Docker image.

It contains a series of instructions telling Docker how an image is constructed.

The docker build command builds an image from a Dockerfile.

Writing our first Dockerfile

Our Dockerfile must be in a new, empty directory.

- Create a directory to hold our Dockerfile.

    $ mkdir myimage

- Create a Dockerfile inside this directory.

    $ cd myimage
    $ vim Dockerfile

Of course, you can use any other editor of your choice.

Type this into our Dockerfile...

    FROM ubuntu
    RUN apt-get update
    RUN apt-get install figlet

- FROM indicates the base image for our build.

- Each RUN line will be executed by Docker during the build.

- Our RUN commands must be non-interactive. (No input can be provided to Docker during the build.)

- In many cases, we will add the -y flag to apt-get.
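The -y point matters in practice: without it, apt-get install waits for a confirmation that nobody can type during a build. As a sketch, the same three-line Dockerfile with the flag added can be written from the shell like this (the myimage directory name is the one used earlier; the rest is an illustration, not the only way to write it).

```shell
# Create the build directory and a non-interactive version of the Dockerfile.
mkdir -p myimage
cat > myimage/Dockerfile <<'EOF'
FROM ubuntu
RUN apt-get update
RUN apt-get install -y figlet
EOF

# To build it afterwards (needs a running Docker Engine):
#   docker build -t figlet myimage
```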

Build it!

Save our file, then execute:

    $ docker build -t figlet .

- -t indicates the tag to apply to the image.

- . indicates the location of the build context.

We will talk more about the build context later.

To keep things simple for now: this is the directory where our Dockerfile is located.

What happens when we build the image?

It depends if we're using BuildKit or not!

If there are lots of blue lines and the first line looks like this:

    [+] Building 1.8s (4/6)

... then we're using BuildKit.

If the output is mostly black-and-white and the first line looks like this:

    Sending build context to Docker daemon 2.048kB

... then we're using the "classic" or "old-style" builder.

containers/Building_Images_With_Dockerfiles.md

To BuildKit or Not To BuildKit

Classic builder:

copies the whole "build context" to the Docker Engine

linear (processes lines one after the other)

requires a full Docker Engine

BuildKit:

only transfers parts of the "build context" when needed

will parallelize operations (when possible)

can run in non-privileged containers (e.g. on Kubernetes)


With the classic builder

The output of docker build looks like this:

    docker build -t figlet .
    Sending build context to Docker daemon  2.048kB
    Step 1/3 : FROM ubuntu
     ---> f975c5035748
    Step 2/3 : RUN apt-get update
     ---> Running in e01b294dbffd
    (...output of the RUN command...)
    Removing intermediate container e01b294dbffd
     ---> eb8d9b561b37
    Step 3/3 : RUN apt-get install figlet
     ---> Running in c29230d70f9b
    (...output of the RUN command...)
    Removing intermediate container c29230d70f9b
     ---> 0dfd7a253f21
    Successfully built 0dfd7a253f21
    Successfully tagged figlet:latest

- The output of the RUN commands has been omitted.
- Let's explain what this output means.

Sending the build context to Docker

    Sending build context to Docker daemon 2.048 kB

The build context is the . directory given to docker build.

It is sent (as an archive) by the Docker client to the Docker daemon.

This allows us to use a remote machine to build using local files.

Be careful (or patient) if that directory is big and your link is slow.

You can speed up the process with a .dockerignore file.

It tells Docker to ignore specific files in the directory.

Only ignore files that you won't need in the build context!

Executing each step

    Step 2/3 : RUN apt-get update
     ---> Running in e01b294dbffd
    (...output of the RUN command...)
    Removing intermediate container e01b294dbffd
     ---> eb8d9b561b37

A container (e01b294dbffd) is created from the base image.

The RUN command is executed in this container.

The container is committed into an image (eb8d9b561b37).

The build container (e01b294dbffd) is removed.

The output of this step will be the base image for the next one.

With BuildKit

    [+] Building 7.9s (7/7) FINISHED
     => [internal] load build definition from Dockerfile  0.0s
     => => transferring dockerfile: 98B  0.0s
     => [internal] load .dockerignore  0.0s
     => => transferring context: 2B  0.0s
     => [internal] load metadata for docker.io/library/ubuntu:latest  1.2s
     => [1/3] FROM docker.io/library/ubuntu@sha256:cf31af331f38d1d7158470e095b132acd126a7180a54f263d386  3.2s
     => => resolve docker.io/library/ubuntu@sha256:cf31af331f38d1d7158470e095b132acd126a7180a54f263d386  0.0s
     => => sha256:cf31af331f38d1d7158470e095b132acd126a7180a54f263d386da88eb681d93 1.20kB / 1.20kB  0.0s
     => => sha256:1de4c5e2d8954bf5fa9855f8b4c9d3c3b97d1d380efe19f60f3e4107a66f5cae 943B / 943B  0.0s
     => => sha256:6a98cbe39225dadebcaa04e21dbe5900ad604739b07a9fa351dd10a6ebad4c1b 3.31kB / 3.31kB  0.0s
     => => sha256:80bc30679ac1fd798f3241208c14accd6a364cb8a6224d1127dfb1577d10554f 27.14MB / 27.14MB  2.3s
     => => sha256:9bf18fab4cfbf479fa9f8409ad47e2702c63241304c2cdd4c33f2a1633c5f85e 850B / 850B  0.5s
     => => sha256:5979309c983a2adeff352538937475cf961d49c34194fa2aab142effe19ed9c1 189B / 189B  0.4s
     => => extracting sha256:80bc30679ac1fd798f3241208c14accd6a364cb8a6224d1127dfb1577d10554f  0.7s
     => => extracting sha256:9bf18fab4cfbf479fa9f8409ad47e2702c63241304c2cdd4c33f2a1633c5f85e  0.0s
     => => extracting sha256:5979309c983a2adeff352538937475cf961d49c34194fa2aab142effe19ed9c1  0.0s
     => [2/3] RUN apt-get update  2.5s
     => [3/3] RUN apt-get install figlet  0.9s
     => exporting to image  0.1s
     => => exporting layers  0.1s
     => => writing image sha256:3b8aee7b444ab775975dfba691a72d8ac24af2756e0a024e056e3858d5a23f7c  0.0s
     => => naming to docker.io/library/figlet  0.0s

Understanding BuildKit output

BuildKit transfers the Dockerfile and the build context

(these are the first two [internal] stages)

Then it executes the steps defined in the Dockerfile

([1/3], [2/3], [3/3])

Finally, it exports the result of the build

(image definition + collection of layers)

BuildKit plain output

When running BuildKit in e.g. a CI pipeline, its output will be different

We can see the same output format by using --progress=plain

The caching system

If you run the same build again, it will be instantaneous. Why?

After each build step, Docker takes a snapshot of the resulting image.

Before executing a step, Docker checks if it has already built the same sequence.

Docker uses the exact strings defined in your Dockerfile, so:

RUN apt-get install figlet cowsay

is different from

RUN apt-get install cowsay figlet

RUN apt-get update is not re-executed when the mirrors are updated

You can force a rebuild with docker build --no-cache ....
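The "exact strings" rule can be illustrated with a deliberately simplified model: pretend the cache key of a step is just a checksum of the instruction text. This is a sketch, not how the builder is implemented (the real cache also depends on the previous step and, for COPY, on file contents); cache_key is a hypothetical helper name.

```shell
# Simplified model: treat the checksum of the instruction text as its cache key.
# (The real builder also takes the parent layer and, for COPY, file contents into account.)
cache_key() {
  printf '%s' "$1" | cksum | cut -d ' ' -f 1
}

key_a=$(cache_key "RUN apt-get install figlet cowsay")
key_b=$(cache_key "RUN apt-get install cowsay figlet")
key_c=$(cache_key "RUN apt-get install figlet cowsay")

# Same packages in a different order: different text, so a different key.
if [ "$key_a" = "$key_b" ]; then echo "a and b: cache hit"; else echo "a and b: cache miss"; fi
# Identical text: identical key.
if [ "$key_a" = "$key_c" ]; then echo "a and c: cache hit"; else echo "a and c: cache miss"; fi
```

The same model explains why RUN apt-get update stays cached: the text of the line did not change, even though the mirrors did.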

Running the image

The resulting image is not different from the one produced manually.

    $ docker run -ti figlet
    root@91f3c974c9a1:/# figlet hello
     _          _ _
    | |__   ___| | | ___
    | '_ \ / _ \ | |/ _ \
    | | | |  __/ | | (_) |
    |_| |_|\___|_|_|\___/

Yay! 🎉

Using image and viewing history

The history command lists all the layers composing an image.

For each layer, it shows its creation time, size, and creation command.

When an image was built with a Dockerfile, each layer corresponds to a line of the Dockerfile.

    $ docker history figlet
    IMAGE         CREATED            CREATED BY                     SIZE
    f9e8f1642759  About an hour ago  /bin/sh -c apt-get install fi  1.627 MB
    7257c37726a1  About an hour ago  /bin/sh -c apt-get update      21.58 MB
    07c86167cdc4  4 days ago         /bin/sh -c #(nop) CMD ["/bin   0 B
    <missing>     4 days ago         /bin/sh -c sed -i 's/^#\s*\(   1.895 kB
    <missing>     4 days ago         /bin/sh -c echo '#!/bin/sh'    194.5 kB
    <missing>     4 days ago         /bin/sh -c #(nop) ADD file:b   187.8 MB

Why sh -c?

On UNIX, to start a new program, we need two system calls:

fork(), to create a new child process;

execve(), to replace the new child process with the program to run.

Conceptually, execve() works like this:

    execve(program, [list, of, arguments])

When we run a command, e.g. ls -l /tmp, something needs to parse the command.

(i.e. split the program and its arguments into a list.)

The shell is usually doing that.

(It also takes care of expanding environment variables and special things like ~.)

Why sh -c?

When we do RUN ls -l /tmp, the Docker builder needs to parse the command.

Instead of implementing its own parser, it outsources the job to the shell.

That's why we see sh -c ls -l /tmp in that case.

But we can also do the parsing jobs ourselves.

This means passing RUN a list of arguments.

This is called the exec syntax.
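We can observe the difference outside of Docker with a small experiment. This is only an emulation: sh -c stands in for the shell syntax, and env echo with a quoted argument stands in for the exec syntax (the program gets its argument list untouched). GREETING is a made-up variable for the demo.

```shell
# A variable that only a shell would expand.
GREETING=hello
export GREETING

# Shell form: the string is handed to sh -c, which parses it and expands $GREETING.
shell_form=$(sh -c 'echo $GREETING')

# Exec form (emulated): the program receives its argument list as-is;
# the single quotes keep the text away from any shell expansion.
exec_form=$(env echo '$GREETING')

echo "shell form printed: $shell_form"
echo "exec form printed:  $exec_form"
```

The first line shows hello, the second shows the literal text $GREETING: nothing expanded it, because no shell parsed that argument.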

Shell syntax vs exec syntax

Dockerfile commands that execute something can have two forms:

plain string, or shell syntax:

RUN apt-get install figlet

JSON list, or exec syntax:

RUN ["apt-get", "install", "figlet"]

We are going to change our Dockerfile to see how it affects the resulting image.

Using exec syntax in our Dockerfile

Let's change our Dockerfile as follows!

    FROM ubuntu
    RUN apt-get update
    RUN ["apt-get", "install", "figlet"]

Then build the new Dockerfile.

    $ docker build -t figlet .

History with exec syntax

Compare the new history:

    $ docker history figlet
    IMAGE         CREATED            CREATED BY                     SIZE
    27954bb5faaf  10 seconds ago     apt-get install figlet         1.627 MB
    7257c37726a1  About an hour ago  /bin/sh -c apt-get update      21.58 MB
    07c86167cdc4  4 days ago         /bin/sh -c #(nop) CMD ["/bin   0 B
    <missing>     4 days ago         /bin/sh -c sed -i 's/^#\s*\(   1.895 kB
    <missing>     4 days ago         /bin/sh -c echo '#!/bin/sh'    194.5 kB
    <missing>     4 days ago         /bin/sh -c #(nop) ADD file:b   187.8 MB

Exec syntax specifies an exact command to execute.

Shell syntax specifies a command to be wrapped within /bin/sh -c "...".

When to use exec syntax and shell syntax

shell syntax:

- is easier to write

- interpolates environment variables and other shell expressions

- creates an extra process (/bin/sh -c ...) to parse the string

- requires /bin/sh to exist in the container

exec syntax:

- is harder to write (and read!)

- passes all arguments without extra processing

- doesn't create an extra process

- doesn't require /bin/sh to exist in the container

- is typically only used for CMD at the end of the Dockerfile

CMD and ENTRYPOINT


Objectives

In this lesson, we will learn about two important Dockerfile commands:

CMD and ENTRYPOINT.

These commands allow us to set the default command to run in a container.

containers/Cmd_And_Entrypoint.md

Defining a default command

When people run our container, we want to greet them with a nice hello message, and using a custom font.

For that, we will execute:

    figlet -f script hello

-f script tells figlet to use a fancy font.

hello is the message that we want it to display.

Adding CMD to our Dockerfile

Our new Dockerfile will look like this:

    FROM ubuntu
    RUN apt-get update
    RUN ["apt-get", "install", "figlet"]
    CMD figlet -f script hello

CMD defines a default command to run when none is given.

It can appear at any point in the file.

Each CMD will replace and override the previous one.

As a result, while you can have multiple CMD lines, only the last one has any effect.

Build and test our image

Let's build it:

    $ docker build -t figlet .
    ...
    Successfully built 042dff3b4a8d
    Successfully tagged figlet:latest

And run it:

    $ docker run figlet
    (...the word "hello" rendered as a script-font banner...)

Overriding CMD

If we want to get a shell into our container (instead of running figlet), we just have to specify a different program to run:

    $ docker run -it figlet bash
    root@7ac86a641116:/#

We specified bash.

It replaced the value of CMD.

Using ENTRYPOINT

We want to be able to specify a different message on the command line,

while retaining figlet and some default parameters.

In other words, we would like to be able to do this:

    $ docker run figlet salut
    (...the word "salut" rendered as a script-font banner...)

We will use the ENTRYPOINT verb in Dockerfile.

Adding ENTRYPOINT to our Dockerfile

Our new Dockerfile will look like this:

    FROM ubuntu
    RUN apt-get update
    RUN ["apt-get", "install", "figlet"]
    ENTRYPOINT ["figlet", "-f", "script"]

ENTRYPOINT defines a base command (and its parameters) for the container.

The command line arguments are appended to those parameters.

Like CMD, ENTRYPOINT can appear anywhere, and replaces the previous value.

Why did we use JSON syntax for our ENTRYPOINT?

Implications of JSON vs string syntax

When CMD or ENTRYPOINT use string syntax, they get wrapped in sh -c.

To avoid this wrapping, we can use JSON syntax.

What if we used ENTRYPOINT with string syntax?

    $ docker run figlet salut

This would run the following command in the figlet image:

    sh -c "figlet -f script" salut
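Why is that a problem? We can try it in any shell, without Docker. In this sketch, echo stands in for figlet (an assumption, so that the snippet runs on machines where figlet isn't installed); the shape of the two command lines is the one described above.

```shell
# "echo" stands in for figlet so the example runs without the image.
# With string syntax, the ENTRYPOINT becomes: sh -c "<string>" followed by the extra arguments.
string_form=$(sh -c 'echo figlet -f script' salut)

# With JSON syntax, the extra argument is appended to the argument list itself.
json_form=$(echo figlet -f script salut)

echo "string syntax ran: $string_form"
echo "JSON syntax ran:   $json_form"
```

In the string case, salut is handed to sh (where it becomes $0) and never reaches the command inside the quotes. That is why our message would be silently dropped.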

Build and test our image

Let's build it:

    $ docker build -t figlet .
    ...
    Successfully built 36f588918d73
    Successfully tagged figlet:latest

And run it:

    $ docker run figlet salut
    (...the word "salut" rendered as a script-font banner...)

Using CMD and ENTRYPOINT together

What if we want to define a default message for our container?

Then we will use ENTRYPOINT and CMD together.

ENTRYPOINT will define the base command for our container.

CMD will define the default parameter(s) for this command.

They both have to use JSON syntax.

CMD and ENTRYPOINT together

Our new Dockerfile will look like this:

    FROM ubuntu
    RUN apt-get update
    RUN ["apt-get", "install", "figlet"]
    ENTRYPOINT ["figlet", "-f", "script"]
    CMD ["hello world"]

ENTRYPOINT defines a base command (and its parameters) for the container.

If we don't specify extra command-line arguments when starting the container, the value of CMD is appended.

Otherwise, our extra command-line arguments are used instead of CMD.

Build and test our image

Let's build it:

    $ docker build -t myfiglet .
    ...
    Successfully built 6e0b6a048a07
    Successfully tagged myfiglet:latest

Run it without parameters:

    $ docker run myfiglet
    (...the words "hello world" rendered as a script-font banner...)

Overriding the image default parameters

Now let's pass extra arguments to the image.

    $ docker run myfiglet hola mundo
    (...the words "hola mundo" rendered as a script-font banner...)

We overrode CMD but still used ENTRYPOINT.

Overriding ENTRYPOINT

What if we want to run a shell in our container?

We cannot just do docker run myfiglet bash because

that would just tell figlet to display the word "bash."

We use the --entrypoint parameter:

    $ docker run -it --entrypoint bash myfiglet
    root@6027e44e2955:/#

CMD and ENTRYPOINT recap

docker run myimage executes ENTRYPOINT + CMD

docker run myimage args executes ENTRYPOINT + args (overriding CMD)

docker run --entrypoint prog myimage executes prog (overriding both)

| Command | ENTRYPOINT | CMD | Result |
|---|---|---|---|
| docker run figlet | none | none | Use values from base image (bash) |
| docker run figlet hola | none | none | Error (executable hola not found) |
| docker run figlet | figlet -f script | none | figlet -f script |
| docker run figlet hola | figlet -f script | none | figlet -f script hola |
| docker run figlet | none | figlet -f script | figlet -f script |
| docker run figlet hola | none | figlet -f script | Error (executable hola not found) |
| docker run figlet | figlet -f script | hello | figlet -f script hello |
| docker run figlet hola | figlet -f script | hello | figlet -f script hola |
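The rules in this recap fit in a few lines of shell. The function below is a sketch written for these slides, not something Docker provides: effective_command is a hypothetical name, ENTRYPOINT and CMD are passed as plain strings, and an empty string means "none". It does not model the first row of the table (inheriting values from the base image).

```shell
# effective_command ENTRYPOINT CMD [ARGS...]
# Prints the command line a container would run, following the recap above.
# ENTRYPOINT and CMD are passed as plain strings; "" means "none".
effective_command() {
  entrypoint=$1
  cmd=$2
  shift 2
  if [ "$#" -gt 0 ]; then
    tail_part=$*        # arguments given to docker run override CMD
  else
    tail_part=$cmd      # otherwise CMD supplies the defaults
  fi
  if [ -n "$entrypoint" ] && [ -n "$tail_part" ]; then
    printf '%s %s\n' "$entrypoint" "$tail_part"
  else
    printf '%s\n' "$entrypoint$tail_part"
  fi
}

effective_command "figlet -f script" "hello"       # last-but-one row of the table
effective_command "figlet -f script" "hello" hola  # last row of the table
effective_command "" "figlet -f script" hola       # no ENTRYPOINT: Docker would try to run "hola"
```

The third call prints just hola, which is exactly the "executable hola not found" situation from the table.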

When to use ENTRYPOINT vs CMD

ENTRYPOINT is great for "containerized binaries".

Example: docker run consul --help

(Pretend that the docker run part isn't there!)

CMD is great for images with multiple binaries.

Example: docker run busybox ifconfig

(It makes sense to indicate which program we want to run!)


Copying files during the build


Objectives

So far, we have installed things in our container images by downloading packages.

We can also copy files from the build context to the container that we are building.

Remember: the build context is the directory containing the Dockerfile.

In this chapter, we will learn a new Dockerfile keyword: COPY.

containers/Copying_Files_During_Build.md

Build some C code

We want to build a container that compiles a basic "Hello world" program in C.

Here is the program, hello.c:

    #include <stdio.h>  /* declares puts() */

    int main () {
      puts("Hello, world!");
      return 0;
    }

Let's create a new directory, and put this file in there.

Then we will write the Dockerfile.

The Dockerfile

On Debian and Ubuntu, the package build-essential will get us a compiler.

When installing it, don't forget to specify the -y flag, otherwise the build will fail (since the build cannot be interactive).

Then we will use COPY to place the source file into the container.

    FROM ubuntu
    RUN apt-get update
    RUN apt-get install -y build-essential
    COPY hello.c /
    RUN make hello
    CMD /hello

Create this Dockerfile.

Testing our C program

Create hello.c and Dockerfile in the same directory.

Run docker build -t hello . in this directory.

Run docker run hello, you should see Hello, world!.

Success!

COPY and the build cache

Run the build again.

Now, modify hello.c and run the build again.

Docker can cache steps involving COPY.

Those steps will not be executed again if the files haven't been changed.

Details

We can COPY whole directories recursively

It is possible to do e.g. COPY . .

(but it might require some extra precautions to avoid copying too much)

In older Dockerfiles, you might see the ADD command; consider it deprecated

(it is similar to COPY but can automatically extract archives)

If we really wanted to compile C code in a container, we would:

place it in a different directory, with the WORKDIR instruction

even better, use the gcc official image
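Putting those last two suggestions together could look like the sketch below. It only writes the Dockerfile into a scratch directory; it does not build anything. The /src directory name is an arbitrary choice, and the sketch assumes that the gcc official image ships with make, which is worth verifying before relying on it.

```shell
# Write a Dockerfile that uses the gcc official image and a dedicated work directory.
# /src is an arbitrary directory name chosen for this example.
workdir=$(mktemp -d)

cat > "$workdir/Dockerfile" <<'EOF'
FROM gcc
WORKDIR /src
COPY hello.c .
RUN make hello
CMD ["/src/hello"]
EOF

cat "$workdir/Dockerfile"
```

Since WORKDIR sets the current directory for the instructions that follow it, COPY hello.c . places the file in /src and make hello produces /src/hello.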

.dockerignore

We can create a file named .dockerignore

(at the top-level of the build context)

It can contain file names and globs to ignore

They won't be sent to the builder

(and won't end up in the resulting image)

See the documentation for the little details

(exceptions can be made with !, multiple directory levels with **...)
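Here is what a small .dockerignore could look like, written from the shell into a scratch directory. The entries are illustrative guesses at what a typical project wants to exclude (build.log in particular is a hypothetical file name); adapt them to your own build context.

```shell
# Create a sample .dockerignore at the top level of a (scratch) build context.
workdir=$(mktemp -d)

cat > "$workdir/.dockerignore" <<'EOF'
# version control data is rarely needed inside an image
.git
# log files at the top level of the build context...
*.log
# ...except this one (hypothetical file name)
!build.log
# tmp directories at any depth
**/tmp
EOF

cat "$workdir/.dockerignore"
```

The ! line must come after the pattern it makes an exception to, because later lines take precedence over earlier ones.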

Exercise — writing Dockerfiles


Let's write Dockerfiles for an existing application!

Check out the code repository

Read all the instructions

Write Dockerfiles

Build and test them individually

containers/Exercise_Dockerfile_Basic.md

Code repository

Clone the repository available at:

https://github.com/jpetazzo/wordsmith

It should look like this:

    ├── LICENSE
    ├── README
    ├── db/
    │   └── words.sql
    ├── web/
    │   ├── dispatcher.go
    │   └── static/
    └── words/
        ├── pom.xml
        └── src/

Instructions

The repository contains instructions in English and French.

For now, we only care about the first part (about writing Dockerfiles).

Place each Dockerfile in its own directory, like this:

    ├── LICENSE
    ├── README
    ├── db/
    │   ├── Dockerfile
    │   └── words.sql
    ├── web/
    │   ├── Dockerfile
    │   ├── dispatcher.go
    │   └── static/
    └── words/
        ├── Dockerfile
        ├── pom.xml
        └── src/

Build and test

Build and run each Dockerfile individually.

For db, we should be able to see some messages confirming that the data set

was loaded successfully (some INSERT lines in the container output).

For web and words, we should be able to see some message looking like

"server started successfully".

That's all we care about for now!

Bonus question: make sure that each container stops correctly when hitting Ctrl-C.

Test with a Compose file

Place the following Compose file at the root of the repository:

    version: "3"
    services:
      db:
        build: db
      words:
        build: words
      web:
        build: web
        ports:
          - 8888:80

Test the whole app by bringing up the stack and connecting to port 8888.

Done for today. On Friday:

Docker Networking, Docker Compose, & Workflows local Dev & Test

Remember to sign up for the Udemy courses (info on front page)

Do the example Dockerfile!

Dig into Bonus Sections

Container networking basics


Objectives

We will now run network services (accepting requests) in containers.

At the end of this section, you will be able to:

Run a network service in a container.

Connect to that network service.

Find a container's IP address.

containers/Container_Networking_Basics.md

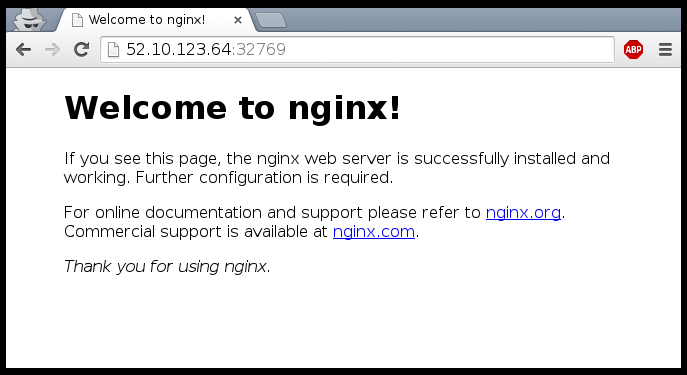

Running a very simple service

We need something small, simple, easy to configure

(or, even better, that doesn't require any configuration at all)

Let's use the official NGINX image (named nginx)

It runs a static web server listening on port 80

It serves a default "Welcome to nginx!" page

Running an NGINX server

    $ docker run -d -P nginx
    66b1ce719198711292c8f34f84a7b68c3876cf9f67015e752b94e189d35a204e

Docker will automatically pull the nginx image from the Docker Hub

-d / --detach tells Docker to run it in the background

-P / --publish-all tells Docker to publish all ports

(publish = make them reachable from other computers)

...OK, how do we connect to our web server now?

Finding our web server port

First, we need to find the port number used by Docker

(the NGINX container listens on port 80, but this port will be mapped)

We can use docker ps:

    $ docker ps
    CONTAINER ID  IMAGE  ...  PORTS                  ...
    e40ffb406c9e  nginx  ...  0.0.0.0:12345->80/tcp  ...

This means:

port 12345 on the Docker host is mapped to port 80 in the container

Now we need to connect to the Docker host!

Finding the address of the Docker host

When running Docker on your Linux workstation:

use localhost, or any IP address of your machine

When running Docker on a remote Linux server:

use any IP address of the remote machine

When running Docker Desktop on Mac or Windows:

use localhost

In other scenarios (docker-machine, local VM...):

use the IP address of the Docker VM

Connecting to our web server (GUI)

Point your browser to the IP address of your Docker host, on the port

shown by docker ps for container port 80.


Connecting to our web server (CLI)

You can also use curl directly from the Docker host.

Make sure to use the right port number if it is different from the example below:

    $ curl localhost:12345
    <!DOCTYPE html>
    <html>
    <head>
    <title>Welcome to nginx!</title>
    ...

How does Docker know which port to map?

There is metadata in the image telling "this image has something on port 80".

We can see that metadata with docker inspect:

    $ docker inspect --format '{{.Config.ExposedPorts}}' nginx
    map[80/tcp:{}]

This metadata was set in the Dockerfile, with the EXPOSE keyword.

We can see that with docker history:

    $ docker history nginx
    IMAGE         CREATED      CREATED BY
    7f70b30f2cc6  11 days ago  /bin/sh -c #(nop) CMD ["nginx" "-g" "…
    <missing>     11 days ago  /bin/sh -c #(nop) STOPSIGNAL [SIGTERM]
    <missing>     11 days ago  /bin/sh -c #(nop) EXPOSE 80/tcp

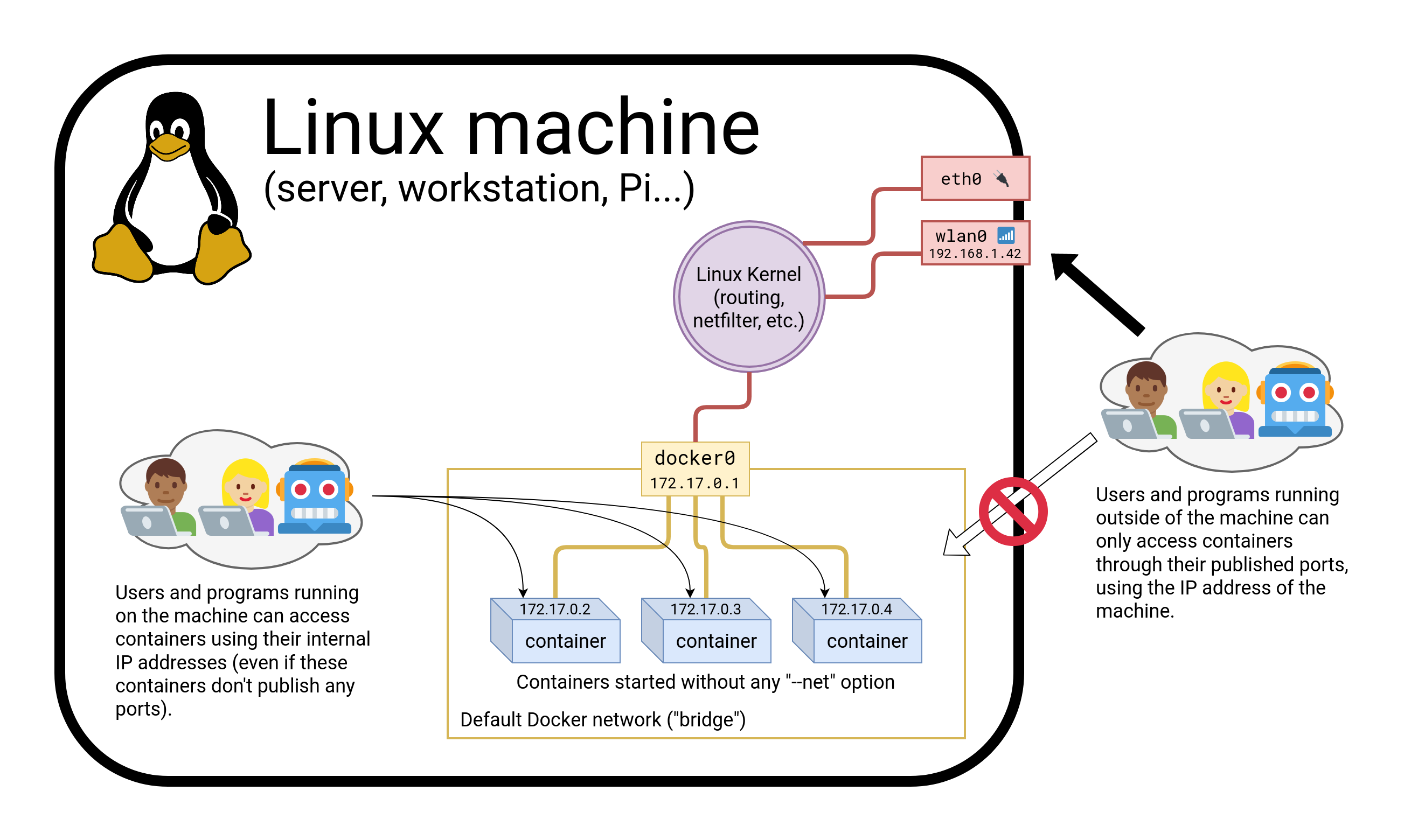

Why can't we just connect to port 80?

Our Docker host has only one port 80

Therefore, we can only have one container at a time on port 80

Therefore, if multiple containers want port 80, only one can get it

By default, containers do not get "their" port number, but a random one

(not "random" as "crypto random", but as "it depends on various factors")

We'll see later how to force a port number (including port 80!)


Using multiple IP addresses

Hey, my network-fu is strong, and I have questions...

Can I publish one container on 127.0.0.2:80, and another on 127.0.0.3:80?

My machine has multiple (public) IP addresses, let's say A.A.A.A and B.B.B.B.

Can I have one container on A.A.A.A:80 and another on B.B.B.B:80?

I have a whole IPv4 subnet, can I allocate it to my containers?

What about IPv6?

You can do all these things when running Docker directly on Linux.

(On other platforms, generally not, but there are some exceptions.)

Finding the web server port in a script

Parsing the output of docker ps would be painful.

There is a command to help us:

    $ docker port <containerID> 80
    0.0.0.0:12345
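In a script, we usually want just the port number. The sketch below shows one way to extract it; host_port is a hypothetical helper, and the address is the sample value from this slide rather than live output, so the snippet runs even without a container. It assumes a single line of output; if docker port prints several lines on your system, pick one before parsing.

```shell
# host_port ADDRESS
# Extracts the port from an address such as 0.0.0.0:12345
# (the format printed by docker port in the example above).
host_port() {
  printf '%s\n' "${1##*:}"
}

# In a real script the address would come from: docker port <containerID> 80
# Here we reuse the sample output from the slide so the snippet runs anywhere.
address="0.0.0.0:12345"
port=$(host_port "$address")
echo "http://localhost:$port/"
```

The ${1##*:} expansion removes everything up to the last colon, which keeps the helper independent of the IP address part.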

Manual allocation of port numbers

If you want to set port numbers yourself, no problem:

    $ docker run -d -p 80:80 nginx
    $ docker run -d -p 8000:80 nginx
    $ docker run -d -p 8080:80 -p 8888:80 nginx

- We are running three NGINX web servers.

- The first one is exposed on port 80.

- The second one is exposed on port 8000.

- The third one is exposed on ports 8080 and 8888.

Note: the convention is port-on-host:port-on-container.

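If the order of the two numbers is hard to remember, this tiny sketch spells it out. split_publish is a hypothetical helper written for this slide; it only handles the two-part host:container form used above, not the longer form that also carries an IP address.

```shell
# split_publish SPEC
# Splits a host:container publish spec (as given to -p) into its two halves.
split_publish() {
  printf 'host=%s container=%s\n' "${1%%:*}" "${1##*:}"
}

split_publish 80:80
split_publish 8000:80
split_publish 8888:80
```

In every case the container side is 80 (where NGINX listens); only the host side changes.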

Plumbing containers into your infrastructure

There are many ways to integrate containers in your network.

Start the container, letting Docker allocate a public port for it.

Then retrieve that port number and feed it to your configuration.

Pick a fixed port number in advance, when you generate your configuration.

Then start your container by setting the port numbers manually.

Use an orchestrator like Kubernetes or Swarm.

The orchestrator will provide its own networking facilities.

Orchestrators typically provide mechanisms to enable direct container-to-container communication across hosts, and publishing/load balancing for inbound traffic.

Finding the container's IP address

We can use the docker inspect command to find the IP address of the container.

    $ docker inspect --format '{{ .NetworkSettings.IPAddress }}' <yourContainerID>
    172.17.0.3

docker inspect is an advanced command, that can retrieve a ton of information about our containers.

Here, we provide it with a format string to extract exactly the private IP address of the container.

Pinging our container

Let's try to ping our container from another container.

    docker run alpine ping <ipaddress>
    PING 172.17.0.X (172.17.0.X): 56 data bytes
    64 bytes from 172.17.0.X: seq=0 ttl=64 time=0.106 ms
    64 bytes from 172.17.0.X: seq=1 ttl=64 time=0.250 ms
    64 bytes from 172.17.0.X: seq=2 ttl=64 time=0.188 ms

When running on Linux, we can even ping that IP address directly!

(And connect to a container's ports even if they aren't published.)

How often do we use -p and -P ?

When running a stack of containers, we will often use Compose

Compose will take care of exposing containers

(through a ports: section in the docker-compose.yml file)

It is, however, fairly common to use docker run -P for a quick test

Or docker run -p ... when an image doesn't EXPOSE a port correctly

Section summary

We've learned how to:

Expose a network port.

Connect to an application running in a container.

Find a container's IP address.


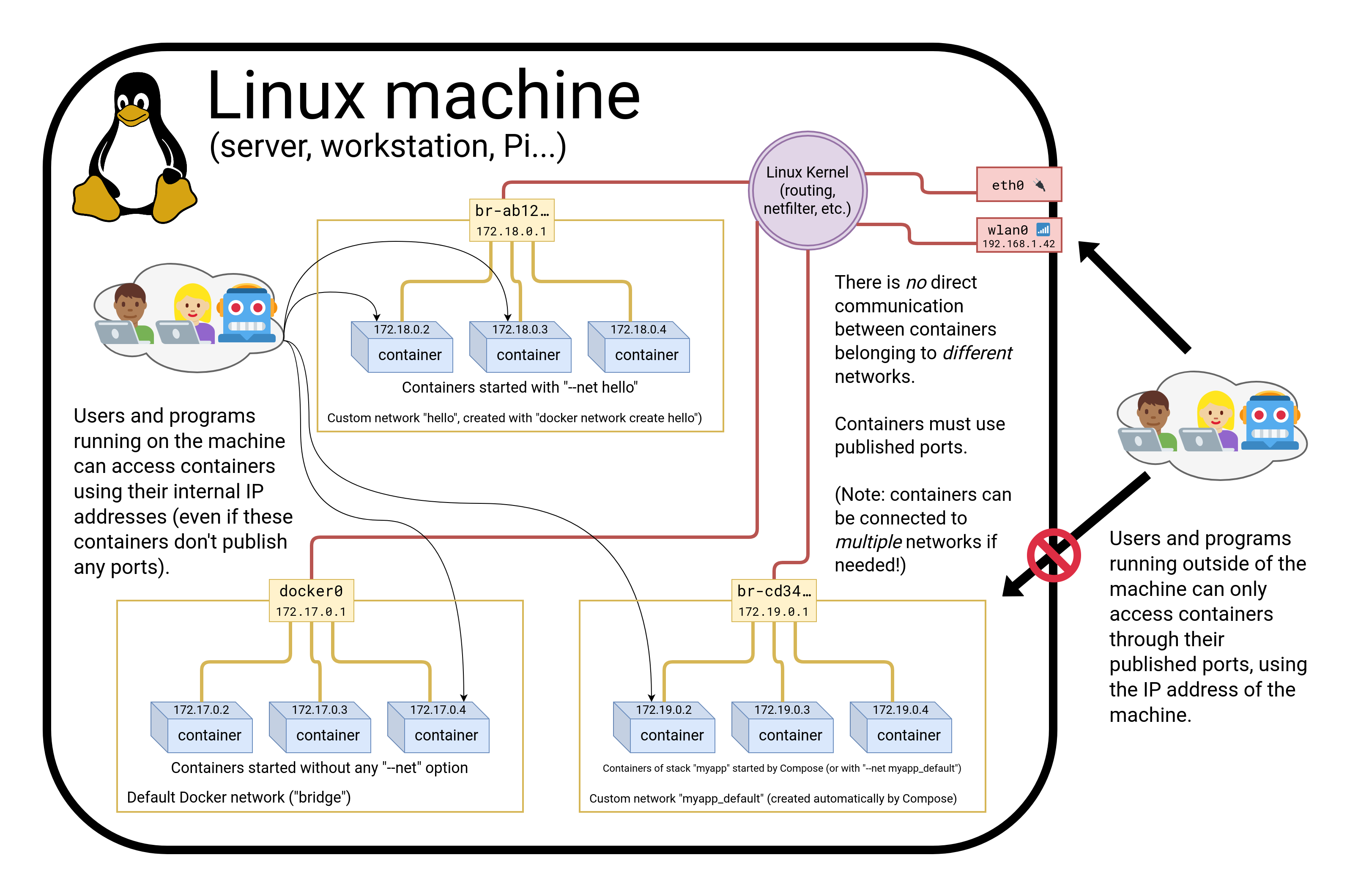

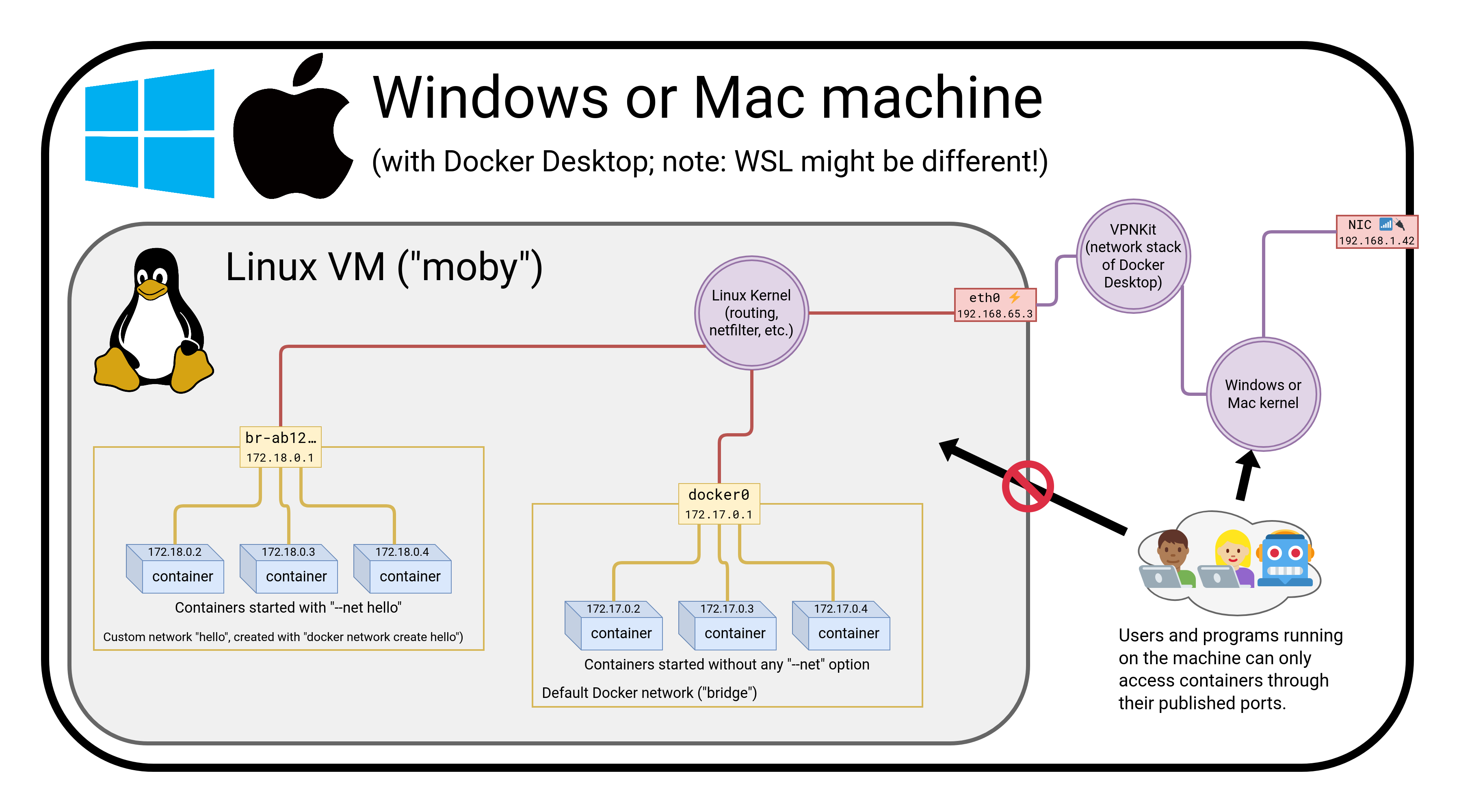

The Container Network Model


Objectives

We will learn about the CNM (Container Network Model).

At the end of this lesson, you will be able to:

Create a private network for a group of containers.

Use container naming to connect services together.

Dynamically connect and disconnect containers to networks.

Set the IP address of a container.

We will also explain the principle of overlay networks and network plugins.

containers/Container_Network_Model.md

The Container Network Model

Docker has "networks".

We can manage them with the docker network commands; for instance:

    $ docker network ls
    NETWORK ID    NAME       DRIVER
    6bde79dfcf70  bridge     bridge
    8d9c78725538  none       null
    eb0eeab782f4  host       host
    4c1ff84d6d3f  blog-dev   overlay
    228a4355d548  blog-prod  overlay

New networks can be created (with docker network create).

(Note: networks none and host are special; let's set them aside for now.)

What's a network?

Conceptually, a Docker "network" is a virtual switch

(we can also think about it like a VLAN, or a WiFi SSID, for instance)

By default, containers are connected to a single network

(but they can be connected to zero, or many networks, even dynamically)

Each network has its own subnet (IP address range)

A network can be local (to a single Docker Engine) or global (span multiple hosts)

Containers can have network aliases providing DNS-based service discovery

(and each network has its own "domain", "zone", or "scope")


Service discovery

A container can be given a network alias

(e.g. with docker run --net some-network --net-alias db ...)

The containers running in the same network can resolve that network alias

(i.e. if they do a DNS lookup on db, it will give the container's address)

We can have a different db container in each network

(this avoids naming conflicts between different stacks)

When we name a container, it automatically adds the name as a network alias

(i.e. docker run --name xyz ... is like docker run --net-alias xyz ...)

Network isolation

Networks are isolated

By default, containers in network A cannot reach those in network B

A container connected to both networks A and B can act as a router or proxy

Published ports are always reachable through the Docker host address

(docker run -P ... makes a container port available to everyone)

How to use networks

We typically create one network per "stack" or app that we deploy

More complex apps or stacks might require multiple networks

(e.g. frontend, backend, ...)

Networks allow us to deploy multiple copies of the same stack

(e.g. prod, dev, pr-442, ....)

If we use Docker Compose, this is managed automatically for us

CNM vs CNI

CNM is the model used by Docker

Kubernetes uses a different model, architected around CNI

(CNI is a kind of API between a container engine and CNI plugins)

Docker model:

- multiple isolated networks

- per-network service discovery

- network interconnection requires extra steps

Kubernetes model:

- single flat network

- per-namespace service discovery

- network isolation requires extra steps (Network Policies)


Creating a network

Let's create a network called dev.

    $ docker network create dev
    4c1ff84d6d3f1733d3e233ee039cac276f425a9d5228a4355d54878293a889ba

The network is now visible with the network ls command:

    $ docker network ls
    NETWORK ID    NAME    DRIVER
    6bde79dfcf70  bridge  bridge
    8d9c78725538  none    null
    eb0eeab782f4  host    host
    4c1ff84d6d3f  dev     bridge

Placing containers on a network

We will create a named container on this network.

It will be reachable with its name, es.

    $ docker run -d --name es --net dev elasticsearch:2
    8abb80e229ce8926c7223beb69699f5f34d6f1d438bfc5682db893e798046863

Communication between containers

Now, create another container on this network.

    $ docker run -ti --net dev alpine sh
    root@0ecccdfa45ef:/#

From this new container, we can resolve and ping the other one, using its assigned name:

    / # ping es
    PING es (172.18.0.2) 56(84) bytes of data.
    64 bytes from es.dev (172.18.0.2): icmp_seq=1 ttl=64 time=0.221 ms
    64 bytes from es.dev (172.18.0.2): icmp_seq=2 ttl=64 time=0.114 ms
    64 bytes from es.dev (172.18.0.2): icmp_seq=3 ttl=64 time=0.114 ms
    ^C
    --- es ping statistics ---
    3 packets transmitted, 3 received, 0% packet loss, time 2000ms
    rtt min/avg/max/mdev = 0.114/0.149/0.221/0.052 ms
    root@0ecccdfa45ef:/#

Resolving container addresses

Since Docker Engine 1.10, name resolution is implemented by a dynamic resolver.

Archeological note: when CNM was introduced (in Docker Engine 1.9, November 2015), name resolution was implemented with /etc/hosts, and it was updated each time containers were added/removed. This could cause interesting race conditions since /etc/hosts was a bind-mount (and couldn't be updated atomically).

    [root@0ecccdfa45ef /]# cat /etc/hosts
    172.18.0.3  0ecccdfa45ef
    127.0.0.1   localhost
    ::1         localhost ip6-localhost ip6-loopback
    fe00::0     ip6-localnet
    ff00::0     ip6-mcastprefix
    ff02::1     ip6-allnodes
    ff02::2     ip6-allrouters
    172.18.0.2  es
    172.18.0.2  es.dev

Service discovery with containers


Let's try to run an application that requires two containers.

The first container is a web server.

The other one is a redis data store.

We will place them both on the dev network created before.

Running the web server

The application is provided by the container image jpetazzo/trainingwheels.

We don't know much about it so we will try to run it and see what happens!

Start the container, exposing all its ports:

$ docker run --net dev -d -P jpetazzo/trainingwheels

Check the port that has been allocated to it:

$ docker ps -l
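The PORTS column of docker ps -l shows the mapping, but it is awkward to read from a script. As a rough sketch, docker port prints only the mappings, and a small helper can pull out the host port. The helper name host_ports is hypothetical, and the line format shown in the comment is an assumption about typical docker port output.

```shell
#!/bin/sh
# Hypothetical helper: reads `docker port <container>` output on stdin
# (lines assumed to look like "80/tcp -> 0.0.0.0:32768") and prints
# "<container port> <host port>" pairs, one per line, without duplicates.
host_ports() {
    while read -r container_port _arrow host_addr; do
        # Keep only what follows the last ":" (works for IPv4 and [::] forms).
        echo "$container_port ${host_addr##*:}"
    done | sort -u
}
```

On the Docker host, this could be used as: docker port "$(docker ps -lq)" | host_ports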

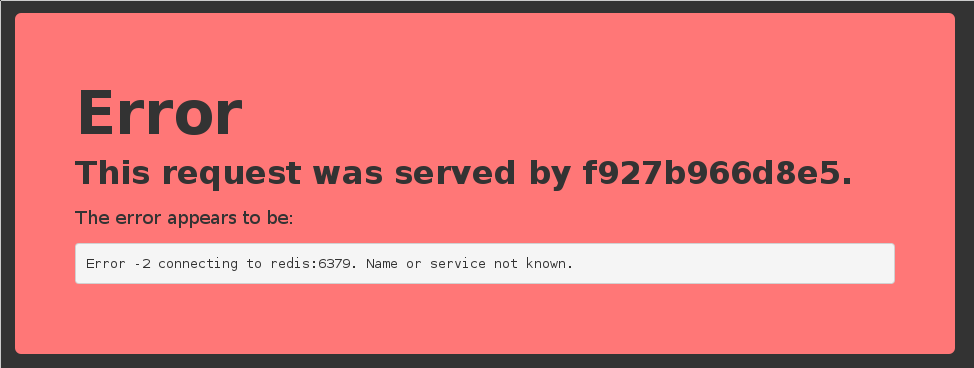

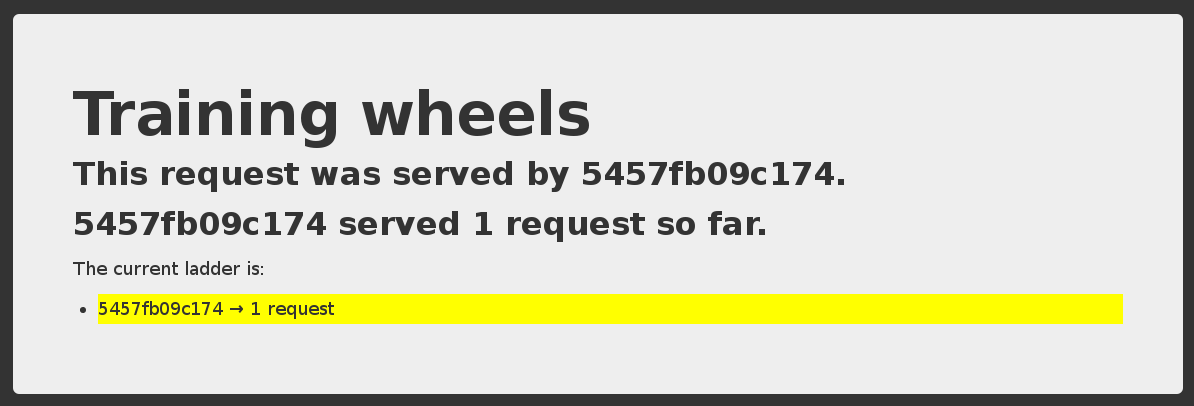

Test the web server

- If we connect to the application now, we will see an error page:

- This is because the Redis service is not running.

- This container tries to resolve the name redis.

Note: we're not using a FQDN or an IP address here; just redis.


Start the data store

We need to start a Redis container.

That container must be on the same network as the web server.

It must have the right network alias (redis) so the application can find it.

Start the container:

$ docker run --net dev --net-alias redis -d redis

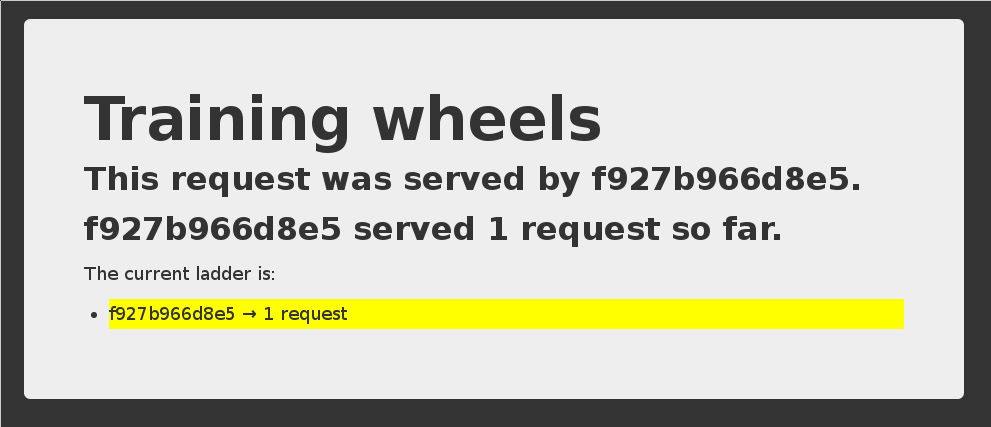

Test the web server again

- If we connect to the application now, we should see that the app is working correctly:

- When the app tries to resolve redis, instead of getting a DNS error, it gets the IP address of our Redis container.

A few words on scope

Container names are unique (there can be only one --name redis)

Network aliases are not unique

We can have the same network alias in different networks:

docker run --net dev --net-alias redis ...
docker run --net prod --net-alias redis ...

We can even have multiple containers with the same alias in the same network

(in that case, we get multiple DNS entries, aka "DNS round robin")


Names are local to each network

Let's try to ping our es container from another container, when that other container is not on the dev network.

$ docker run --rm alpine ping es
ping: bad address 'es'

Names can be resolved only when containers are on the same network.

Containers can contact each other only when they are on the same network (you can try to ping using the IP address to verify).


Network aliases

We would like to have another network, prod, with its own es container. But there can be only one container named es!

We will use network aliases.

A container can have multiple network aliases.

Network aliases are local to a given network (only exist in this network).

Multiple containers can have the same network alias (even on the same network).

Since Docker Engine 1.11, resolving a network alias yields the IP addresses of all containers holding this alias.


Creating containers on another network

Create the prod network.

$ docker network create prod
5a41562fecf2d8f115bedc16865f7336232a04268bdf2bd816aecca01b68d50c

We can now create multiple containers with the es alias on the new prod network.

$ docker run -d --name prod-es-1 --net-alias es --net prod elasticsearch:2
38079d21caf0c5533a391700d9e9e920724e89200083df73211081c8a356d771
$ docker run -d --name prod-es-2 --net-alias es --net prod elasticsearch:2
1820087a9c600f43159688050dcc164c298183e1d2e62d5694fd46b10ac3bc3d

Resolving network aliases

Let's try DNS resolution first, using the nslookup tool that ships with the alpine image.

$ docker run --net prod --rm alpine nslookup es.
Name: es
Address 1: 172.23.0.3 prod-es-2.prod
Address 2: 172.23.0.2 prod-es-1.prod

(You can ignore the can't resolve '(null)' errors.)

Connecting to aliased containers

Each ElasticSearch instance has a name (generated when it is started). This name can be seen when we issue a simple HTTP request on the ElasticSearch API endpoint.

Try the following command a few times:

$ docker run --rm --net dev centos curl -s es:9200
{
  "name" : "Tarot",
...
}

Then try it a few times by replacing --net dev with --net prod:

$ docker run --rm --net prod centos curl -s es:9200
{
  "name" : "The Symbiote",
...
}

Good to know ...

Docker will not create network names and aliases on the default bridge network.

Therefore, if you want to use those features, you have to create a custom network first.

Network aliases are not unique on a given network.

i.e., multiple containers can have the same alias on the same network.

In that scenario, the Docker DNS server will return multiple records.

(i.e. you will get DNS round robin out of the box.)

Enabling Swarm Mode gives access to clustering and load balancing with IPVS.

Creation of networks and network aliases is generally automated with tools like Compose.


A few words about round robin DNS

Don't rely exclusively on round robin DNS to achieve load balancing.

Many factors can affect DNS resolution, and you might see:

- all traffic going to a single instance;

- traffic being split (unevenly) between some instances;

- different behavior depending on your application language;

- different behavior depending on your base distro;

- different behavior depending on other factors (sic).

It's OK to use DNS to discover available endpoints, but remember that you have to re-resolve every now and then to discover new endpoints.
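One way to "re-resolve every now and then" is a small polling loop. The sketch below is only an illustration: the function names are hypothetical, and it assumes a glibc-style getent that supports the ahostsv4 database (which may not be available on every base image).

```shell
#!/bin/sh
# Print the IPv4 addresses that a name currently resolves to, one per line.
# Assumption: `getent ahostsv4` is available (glibc-based images).
resolve_endpoints() {
    getent ahostsv4 "$1" | awk '{ print $1 }' | sort -u
}

# Print the addresses found in the second list but not in the first.
# Both lists are whitespace- or newline-separated.
new_endpoints() {
    for addr in $2; do
        printf '%s\n' $1 | grep -qxF "$addr" || echo "$addr"
    done
}

# Poll a name forever and report endpoints that appear between two lookups.
# $1 is the name to resolve, $2 the polling interval in seconds (default 30).
watch_endpoints() {
    known=""
    while true; do
        current=$(resolve_endpoints "$1")
        added=$(new_endpoints "$known" "$current")
        if [ -n "$added" ]; then
            echo "new endpoints: $added"
        fi
        known=$current
        sleep "${2:-30}"
    done
}
```

From a container attached to the prod network, watch_endpoints es 10 would report each new container holding the es alias within about ten seconds of its start.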


Custom networks

When creating a network, extra options can be provided.

- --internal disables outbound traffic (the network won't have a default gateway).
- --gateway indicates which address to use for the gateway (when outbound traffic is allowed).
- --subnet (in CIDR notation) indicates the subnet to use.
- --ip-range (in CIDR notation) indicates the subnet to allocate from.
- --aux-address allows specifying a list of reserved addresses (which won't be allocated to containers).
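Here is a rough sketch of how several of these options combine in one command. The run and create_custom_network helpers are hypothetical, and every address is an illustrative value rather than something used elsewhere in this workshop. Setting DRY_RUN=1 prints the command instead of running it.

```shell
#!/bin/sh
# Hypothetical helper: print the command when DRY_RUN=1, run it otherwise.
run() {
    if [ "${DRY_RUN:-0}" = 1 ]; then
        echo "$*"
    else
        "$@"
    fi
}

# Create a network with an explicit addressing plan.
# $1 is the network name. All addresses are illustrative assumptions:
# containers get addresses from 10.77.1.0/24, and 10.77.1.5 is reserved.
create_custom_network() {
    run docker network create \
        --subnet 10.77.0.0/16 \
        --gateway 10.77.0.1 \
        --ip-range 10.77.1.0/24 \
        --aux-address reserved=10.77.1.5 \
        "$1"
}
```

Try DRY_RUN=1 first to review the command, then call create_custom_network demo without it to create the network.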


Setting containers' IP address

- It is possible to set a container's address with --ip.
- The IP address has to be within the subnet used for the container.

A full example would look like this.

$ docker network create --subnet 10.66.0.0/16 pubnet
42fb16ec412383db6289a3e39c3c0224f395d7f85bcb1859b279e7a564d4e135
$ docker run --net pubnet --ip 10.66.66.66 -d nginx
b2887adeb5578a01fd9c55c435cad56bbbe802350711d2743691f95743680b09

Note: don't hard code container IP addresses in your code!

I repeat: don't hard code container IP addresses in your code!
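The "within the subnet" rule can be checked before calling docker run. This is a minimal sketch in plain shell arithmetic, assuming IPv4 only and well-formed input. ip_to_int and ip_in_cidr are hypothetical helper names, not Docker commands.

```shell
#!/bin/sh
# Convert a dotted-quad IPv4 address to its 32-bit integer value.
# Intended to be called in a command substitution, so the IFS change
# and `set --` stay inside the subshell.
ip_to_int() {
    IFS=.
    set -- $1
    echo $(( ($1 << 24) | ($2 << 16) | ($3 << 8) | $4 ))
}

# Succeed (exit status 0) if address $1 belongs to subnet $2 (CIDR notation).
ip_in_cidr() {
    net=${2%/*}     # part before the slash, e.g. 10.66.0.0
    bits=${2#*/}    # prefix length, e.g. 16
    mask=$(( (0xFFFFFFFF << (32 - bits)) & 0xFFFFFFFF ))
    [ $(( $(ip_to_int "$1") & mask )) -eq $(( $(ip_to_int "$net") & mask )) ]
}
```

For example: ip_in_cidr 10.66.66.66 10.66.0.0/16 && docker run --net pubnet --ip 10.66.66.66 -d nginx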


Network drivers

A network is managed by a driver.

The built-in drivers include:

- bridge (default)
- none
- host
- macvlan
- overlay (for Swarm clusters)

More drivers can be provided by plugins (OVS, VLAN...)

A network can have a custom IPAM (IP allocator).


Overlay networks

The features we've seen so far only work when all containers are on a single host.

If containers span multiple hosts, we need an overlay network to connect them together.

Docker ships with a default network plugin, overlay, implementing an overlay network leveraging VXLAN, enabled with Swarm Mode.

Other plugins (Weave, Calico...) can provide overlay networks as well.

Once you have an overlay network, all the features that we've used in this chapter work identically across multiple hosts.


Multi-host networking (overlay)

Out of the scope for this intro-level workshop!

Very short instructions:

- enable Swarm Mode (docker swarm init then docker swarm join on other nodes)
- docker network create mynet --driver overlay
- docker service create --network mynet myimage

If you want to learn more about Swarm mode, you can check this video or these slides.


Multi-host networking (plugins)

Out of the scope for this intro-level workshop!

General idea:

- install the plugin (they often ship within containers)
- run the plugin (if it's in a container, it will often require extra parameters; don't just docker run it blindly!)
- some plugins require configuration or activation (creating a special file that tells Docker "use the plugin whose control socket is at the following location")
- you can then docker network create --driver pluginname


Connecting and disconnecting dynamically

So far, we have specified which network to use when starting the container.

The Docker Engine also allows connecting and disconnecting while the container is running.

This feature is exposed through the Docker API, and through two Docker CLI commands:

- docker network connect <network> <container>
- docker network disconnect <network> <container>

Dynamically connecting to a network

We have a container named es connected to a network named dev.

Let's start a simple alpine container on the default network:

$ docker run -ti alpine sh
/ #

In this container, try to ping the es container:

/ # ping es
ping: bad address 'es'

This doesn't work, but we will change that by connecting the container.

Finding the container ID and connecting it

Figure out the ID of our alpine container; here are two methods:

- looking at /etc/hostname in the container,
- running docker ps -lq on the host.

Run the following command on the host:

$ docker network connect dev <container_id>


Checking what we did